Rethinking Orchestration in Databricks Architectures

How modern Databricks capabilities are reshaping traditional Azure data platform architectures

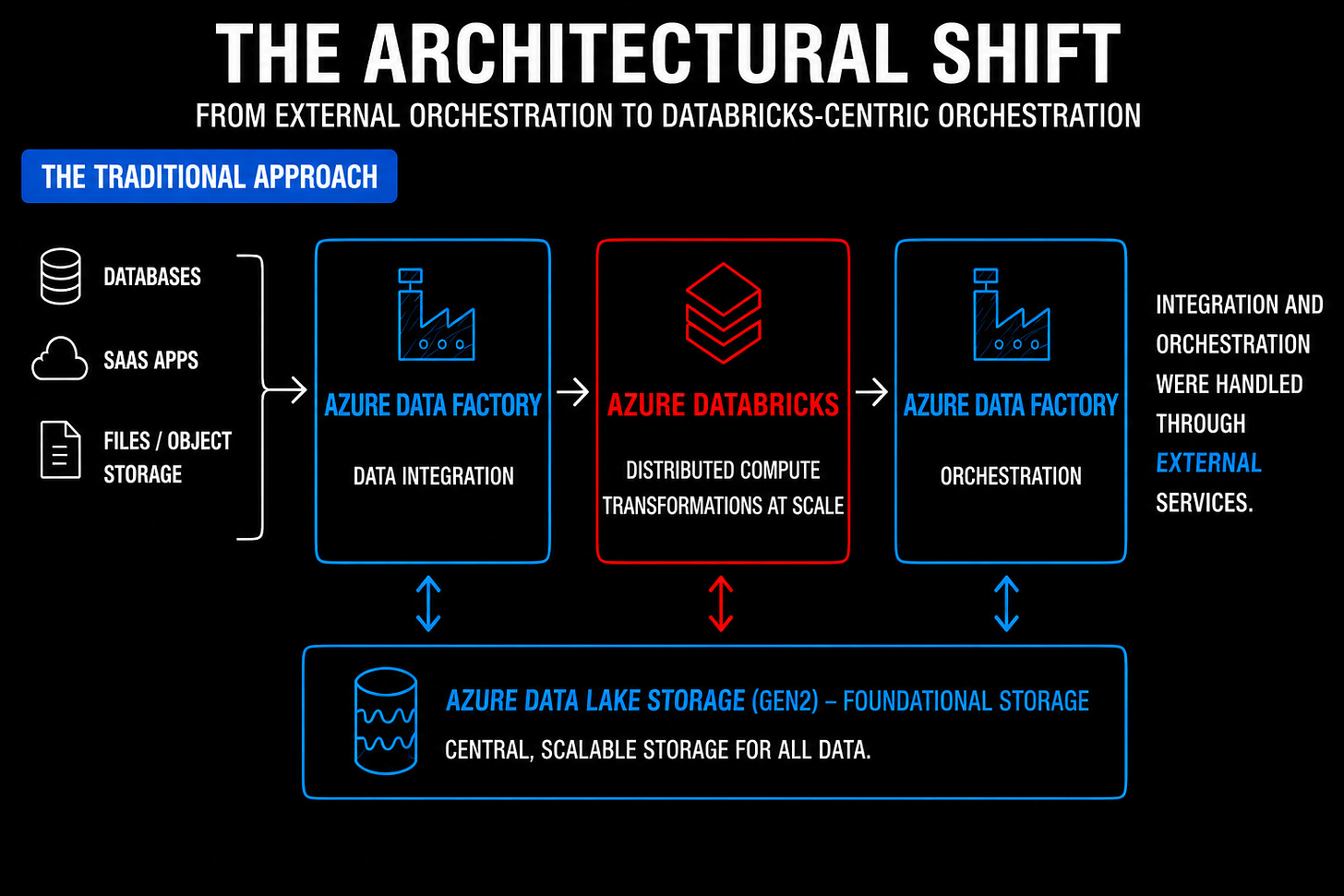

For many years, a typical Azure data platform architecture looked something like this:

Azure Data Factory (ADF) for data integration and orchestration

Azure Databricks for compute and transformation

Azure Data Lake Storage Gen2 for storage

This architecture became so common that many teams stopped questioning it.

If you were building a modern data platform on Azure, ADF was often treated as a foundational part of the solution. ADF handled orchestration and enterprise integration across systems, while Databricks focused primarily on distributed data processing and ETL workloads using Apache Spark.

In many environments, the separation of responsibilities looked something like this:

ADF integrates and orchestrates.

Databricks computes and transforms.

And to be fair, this approach made complete sense at the time.

Earlier versions of Databricks Workflows (now Lakeflow Jobs) were far less capable than what we have today, and the platform itself did not provide the orchestration and enterprise integration capabilities that ADF already offered.

For a long time, this was not just a popular architecture pattern. It was often the most practical one.

But over the last few years, something important has changed.

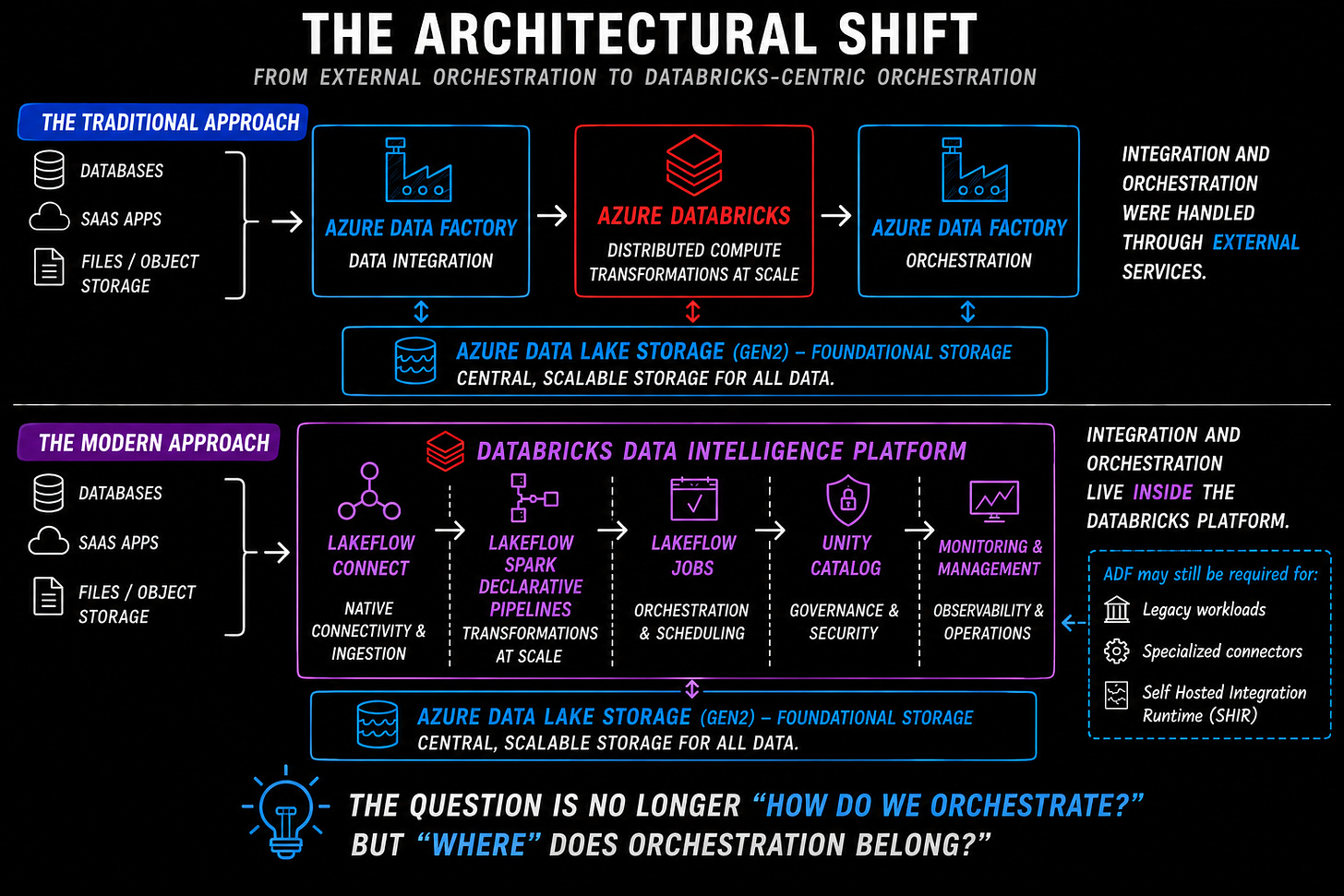

Databricks capabilities have expanded significantly beyond traditional Spark processing workloads. The platform now includes increasingly mature native capabilities for orchestration, ingestion, governance, monitoring, and event-driven execution.

Features such as Lakeflow Connect, Lakeflow Spark Declarative Pipelines, Lakeflow Jobs, and Unity Catalog integration are gradually reshaping how modern lakehouse architectures are designed.

As Databricks continues expanding beyond compute into workflow orchestration and platform operations, the assumption that orchestration and integration must always live outside the platform is beginning to change.

That does not mean Azure Data Factory is obsolete. Far from it.

ADF still provides significant value in many enterprise environments, especially when dealing with hybrid and on-premises integration, extensive enterprise connector support, and orchestration across multiple Azure services and enterprise systems.

Similarly, Apache Airflow continues to play an important role in engineering-heavy organizations that require orchestration-as-code, platform-neutral workflows, dynamic workflow patterns, and multi-platform coordination.

But the architectural conversation is changing.

Increasingly, teams are now asking a different question:

Do orchestration and integration still need to live outside the data platform by default?

And that shift is worth paying attention to.

Note: While this article primarily references Azure Data Factory (ADF), Microsoft increasingly positions Data Factory within Microsoft Fabric as the preferred direction for many newer data engineering projects. Many of the orchestration and integration considerations discussed for ADF in this article also apply to Data Factory in Fabric.

Why External Orchestration Became the Default

The widespread adoption of external orchestration was largely driven by enterprise operational requirements.

Organisations needed far more than just distributed compute for Spark workloads. They also needed centralised scheduling, operational monitoring, retries, dependency management, alerting, governance, and integration across a growing number of systems and services.

Earlier versions of Databricks did not provide many of these capabilities natively. ADF, on the other hand, already offered mature orchestration and enterprise integration capabilities that aligned naturally with enterprise operational requirements.

This made ADF a natural fit for many enterprise architectures, particularly in environments involving on-premises systems, legacy databases, SaaS applications, APIs, file-based integrations, and multiple downstream platforms.

Capabilities such as orchestration across Azure services, extensive enterprise connector support, and hybrid integration through the Self-hosted Integration Runtime (SHIR) allowed organisations to integrate cloud and on-premises systems through a centralised operational layer.

In many enterprises, Databricks became one component inside a much larger operational ecosystem. A typical workflow might involve ingesting data from enterprise systems, coordinating dependencies across multiple services, triggering Databricks notebooks or jobs, and orchestrating downstream operational processes.

The conversation is changing now, but not because enterprises no longer need orchestration, integration, monitoring, governance, or operational coordination across systems.

It is because the capabilities inside Databricks have evolved significantly.

What Changed Inside Databricks?

Over the last few years, Databricks has introduced increasingly mature native capabilities for orchestration, ingestion, governance, and operational management.

One of the biggest drivers behind this shift has been the evolution of Lakeflow Jobs.

What started as relatively lightweight job scheduling has gradually evolved into a much more capable orchestration layer supporting task dependencies, retries, notifications, conditional execution, triggers, scheduling, and orchestration across notebooks, SQL, Python, and pipelines.

For many Databricks-centric workloads, these capabilities significantly reduce the need for a separate external orchestration layer.
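To make the capabilities above more concrete, here is a minimal sketch of what a multi-task job definition can look like. The dictionary is shaped like a Databricks Jobs API payload (`tasks`, `task_key`, `depends_on`, `max_retries`, `email_notifications`, `schedule`), but the job name, notebook paths, e-mail address, and the `execution_order` helper are all hypothetical and exist only for illustration. The helper is a local sanity check on the dependency graph, not something Databricks provides.

```python
# Hypothetical job definition shaped like a Databricks Jobs API payload.
# Job name, notebook paths, and the e-mail address are illustrative assumptions.
job_spec = {
    "name": "daily_sales_refresh",
    "schedule": {
        "quartz_cron_expression": "0 0 2 * * ?",  # every day at 02:00
        "timezone_id": "UTC",
    },
    "email_notifications": {"on_failure": ["data-team@example.com"]},
    "tasks": [
        {
            "task_key": "ingest",
            "notebook_task": {"notebook_path": "/Workspace/etl/ingest"},
            "max_retries": 2,                      # retry transient failures
            "min_retry_interval_millis": 60_000,   # wait one minute between retries
        },
        {
            "task_key": "transform",
            "depends_on": [{"task_key": "ingest"}],
            "notebook_task": {"notebook_path": "/Workspace/etl/transform"},
        },
        {
            "task_key": "publish",
            "depends_on": [{"task_key": "transform"}],
            "run_if": "ALL_SUCCESS",               # run only if upstream tasks succeeded
            "notebook_task": {"notebook_path": "/Workspace/etl/publish"},
        },
    ],
}


def execution_order(spec):
    """Return task keys in an order that respects depends_on (local check only)."""
    deps = {
        task["task_key"]: [d["task_key"] for d in task.get("depends_on", [])]
        for task in spec["tasks"]
    }
    # Reject references to tasks that are not defined in this job.
    for key, parents in deps.items():
        for parent in parents:
            if parent not in deps:
                raise ValueError(f"{key} depends on unknown task {parent}")

    order = []
    remaining = dict(deps)
    while remaining:
        # A task is ready once all of its parents have been scheduled.
        ready = sorted(
            key for key, parents in remaining.items()
            if all(parent in order for parent in parents)
        )
        if not ready:
            raise ValueError("dependency cycle detected")
        order.extend(ready)
        for key in ready:
            del remaining[key]
    return order


print(execution_order(job_spec))  # ['ingest', 'transform', 'publish']
```

In practice, a definition like this would be submitted through the Jobs API, the Databricks SDK, or an infrastructure-as-code tool. The point of the sketch is only that dependencies, retries, notifications, and scheduling all live in a single job definition inside the platform.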

At the same time, other platform capabilities have continued maturing around it.

Lakeflow Connect expands native ingestion capabilities within the Databricks ecosystem through managed connectors and ingestion support for selected SaaS platforms, databases, and streaming sources.

Lakeflow Spark Declarative Pipelines reduce the operational overhead of building and maintaining data pipelines by providing a more managed and declarative pipeline framework.

Unity Catalog centralises governance, permissions, lineage, and data discovery across workloads.

Together, these capabilities increasingly allow teams to build and operate data platforms more natively within Databricks itself.

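Conceptually, the architectural shift is from an external orchestrator wrapped around Databricks towards orchestration, ingestion, and governance handled inside Databricks, with external tools added where they are needed.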

When External Orchestration Still Makes Sense

Despite these changes, external orchestration tools still play an important role in many enterprise environments.

This is not a situation where one approach completely replaces another. While Databricks' native orchestration capabilities have matured significantly, tools like ADF continue to solve important problems that often extend beyond a Databricks-centric architecture.

ADF still provides strong advantages in areas such as:

Hybrid and on-premises integration

Broader enterprise connector support

Orchestration across multiple Azure services and enterprise systems

Secure connectivity to private networks and on-premises environments through the Self-hosted Integration Runtime (SHIR)

This becomes particularly important in organisations where the data platform extends well beyond Databricks itself.

Many enterprises still operate complex environments involving legacy systems, on-premises infrastructure, and enterprise applications. In these environments, ADF often acts as a broader enterprise integration layer rather than simply a Databricks scheduler.

Similarly, Apache Airflow continues to be relevant in engineering-heavy environments that prefer orchestration-as-code and more platform-neutral workflow orchestration.

Today, many teams first ask:

Can this workload be orchestrated natively within Databricks?

They introduce external orchestration layers only when there is a clear operational or architectural reason to do so.
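One way to picture this "native first" question is as a short checklist. The sketch below is purely illustrative: the `Workload` fields and the `recommend` function are hypothetical names, and the criteria are simply the ADF strengths listed earlier in this section, not an official decision rule.

```python
from dataclasses import dataclass


@dataclass
class Workload:
    """Hypothetical description of a workload, for illustration only."""
    name: str
    needs_on_prem_access: bool = False          # hybrid or on-premises sources
    needs_unsupported_connector: bool = False   # no native connector available
    spans_non_databricks_systems: bool = False  # coordinates other services


def external_orchestration_reasons(workload):
    """Collect the reasons, if any, for adding an external orchestration layer."""
    reasons = []
    if workload.needs_on_prem_access:
        reasons.append("hybrid or on-premises integration")
    if workload.needs_unsupported_connector:
        reasons.append("connector not available natively")
    if workload.spans_non_databricks_systems:
        reasons.append("orchestration across other services and systems")
    return reasons


def recommend(workload):
    """Default to native orchestration unless there is a clear reason not to."""
    return "external" if external_orchestration_reasons(workload) else "native"


print(recommend(Workload("lakehouse_refresh")))                        # native
print(recommend(Workload("erp_extract", needs_on_prem_access=True)))   # external
```

Real decisions involve more than three flags, such as team skills, existing investments, and cost. The sketch only captures the ordering of the questions: start with native orchestration, then justify anything added on top.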

Final Thoughts

For many years, external orchestration was often treated as a default architectural requirement in Databricks environments. And historically, that approach made complete sense.

ADF provided mature orchestration and enterprise integration capabilities at a time when Databricks itself focused primarily on distributed compute and Spark processing.

But the Databricks platform has evolved significantly since then.

Modern Databricks capabilities increasingly allow teams to ingest data, orchestrate workloads, manage pipelines, govern data assets, and monitor operational workflows directly within the platform itself.

As a result, the architectural assumptions around orchestration are beginning to change.

This does not mean ADF is disappearing. Nor does it mean Apache Airflow no longer has a place in modern architectures.

Both continue to solve important enterprise and engineering problems.

But increasingly, external orchestration is becoming an architectural choice rather than an architectural default.

That distinction matters.

The question many teams are now asking is no longer:

“Which orchestration tool should we place in front of Databricks?”

Instead, the question is increasingly becoming:

“Does this workload require external orchestration at all?”

And for many modern Databricks-centric architectures, the answer may increasingly be: not necessarily.

This is how I currently see the architectural shift happening around orchestration in modern Databricks environments. But I’d genuinely be interested to hear how others are approaching orchestration, especially in enterprise settings where integration, governance, and operational complexity still play a major role.
